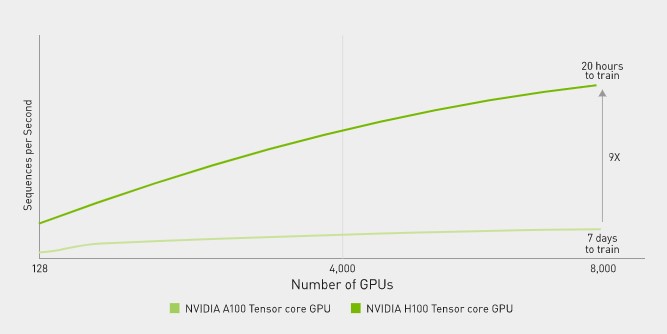

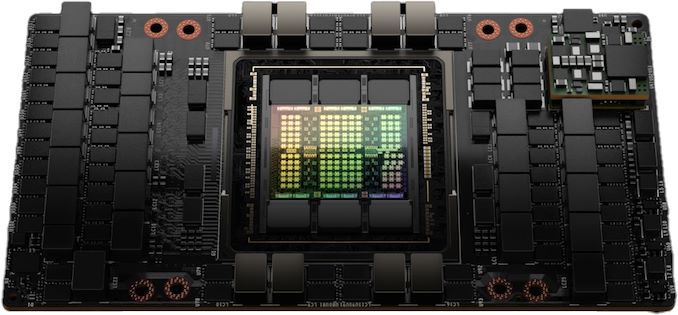

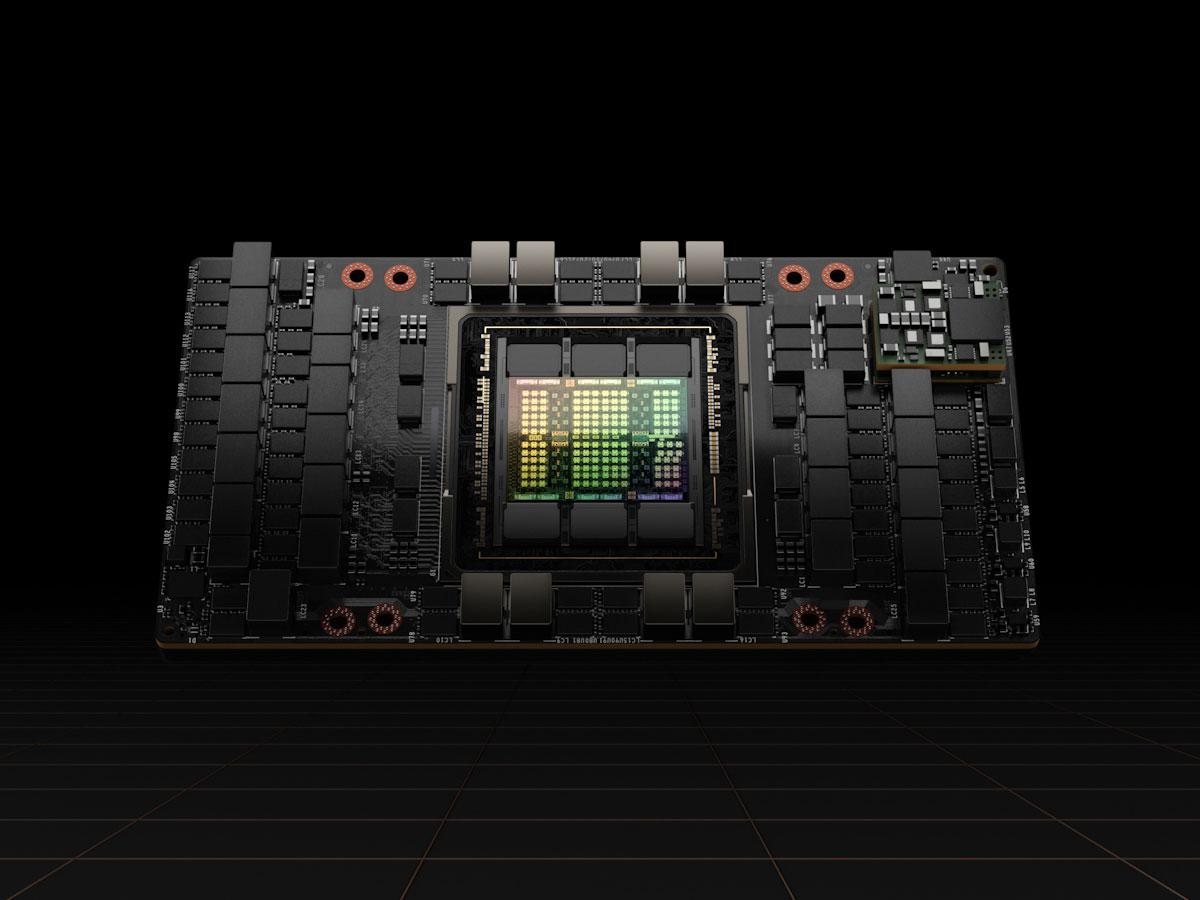

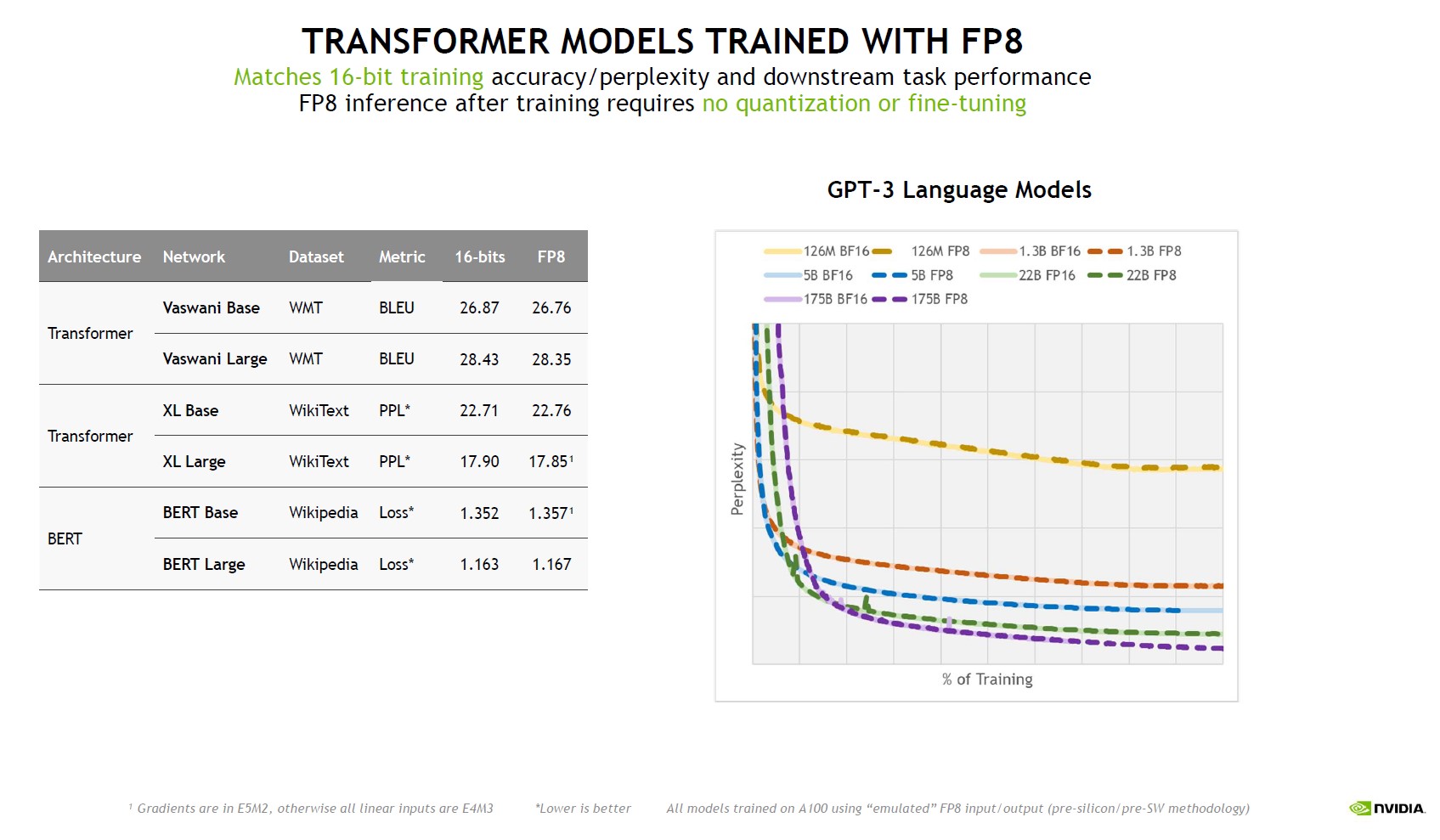

NVIDIA unveils Hopper, its new hardware architecture to transform data centers into AI factories | ZDNET

Fine-tuning GPT-J 6B on Google Colab or Equivalent Desktop or Server GPU | by Mike Ohanu | Better Programming

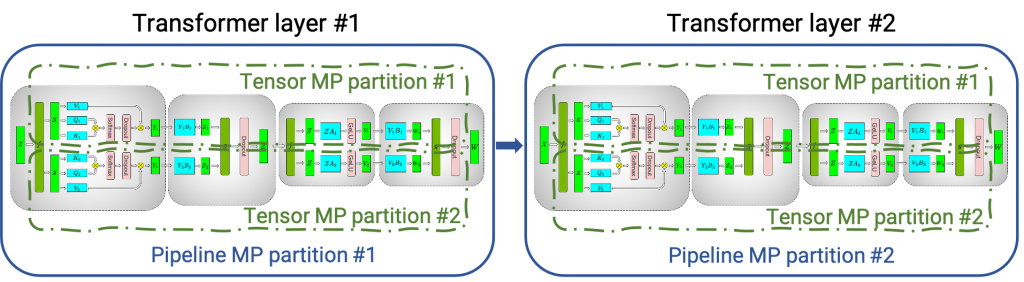

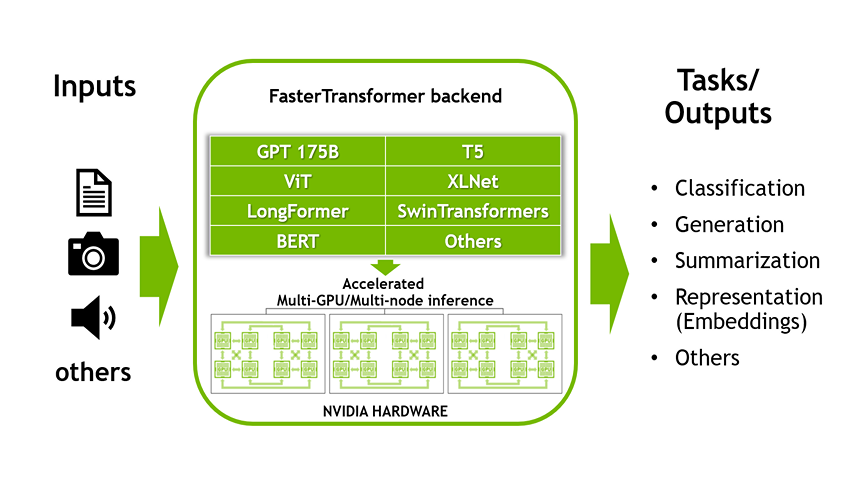

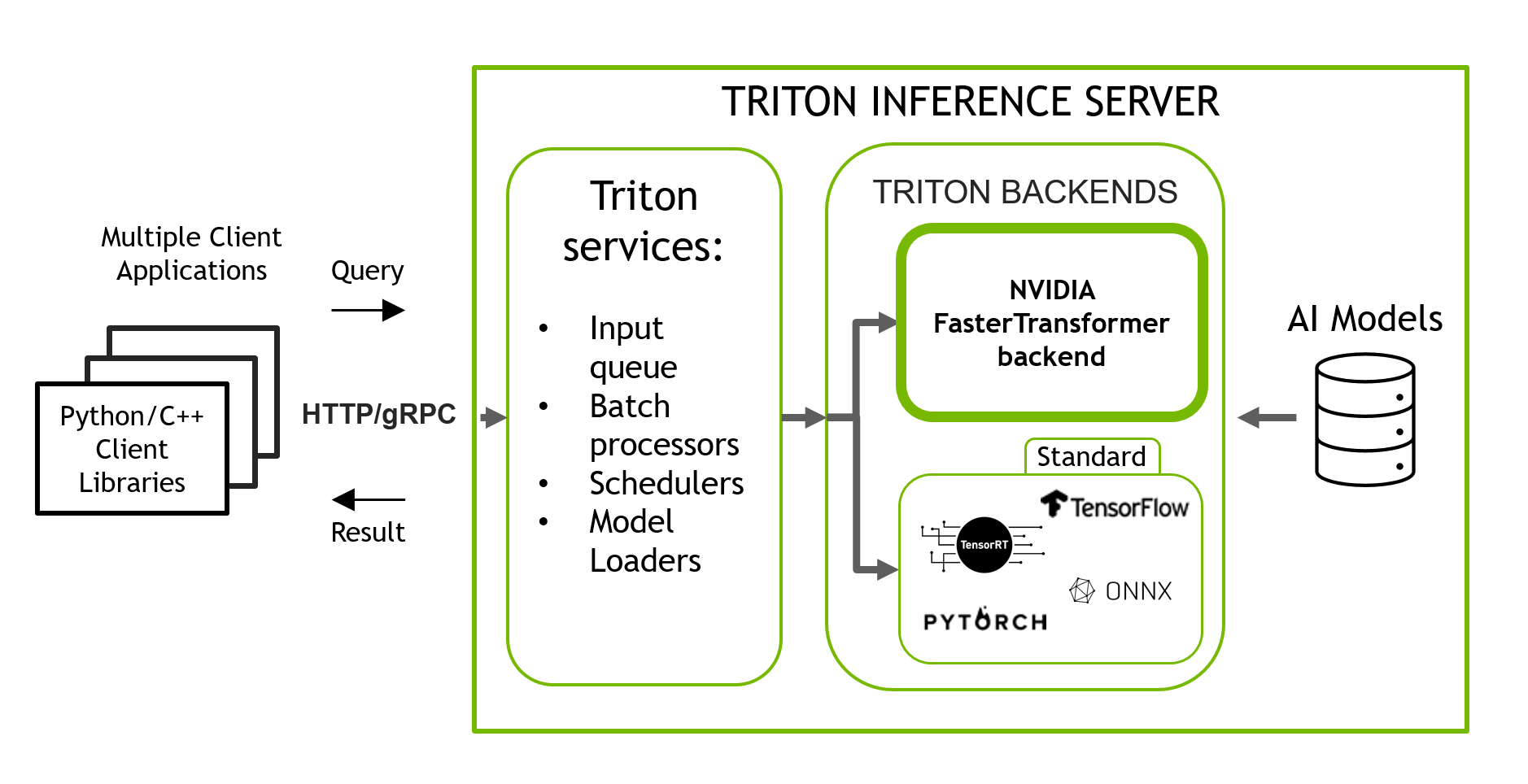

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog

![\[P\] Up to 12X faster GPU inference on Bert, T5 and other transformers with OpenAI Triton kernels : r/MachineLearning](https://preview.redd.it/p-up-to-12x-faster-gpu-inference-on-bert-t5-and-other-v0-mlo3wvn0d3w91.png?width=2738&format=png&auto=webp&s=8fe6588481fc7f78796c6a421c1d7b0dc34256e3)

\[P\] Up to 12X faster GPU inference on Bert, T5 and other transformers with OpenAI Triton kernels : r/MachineLearning

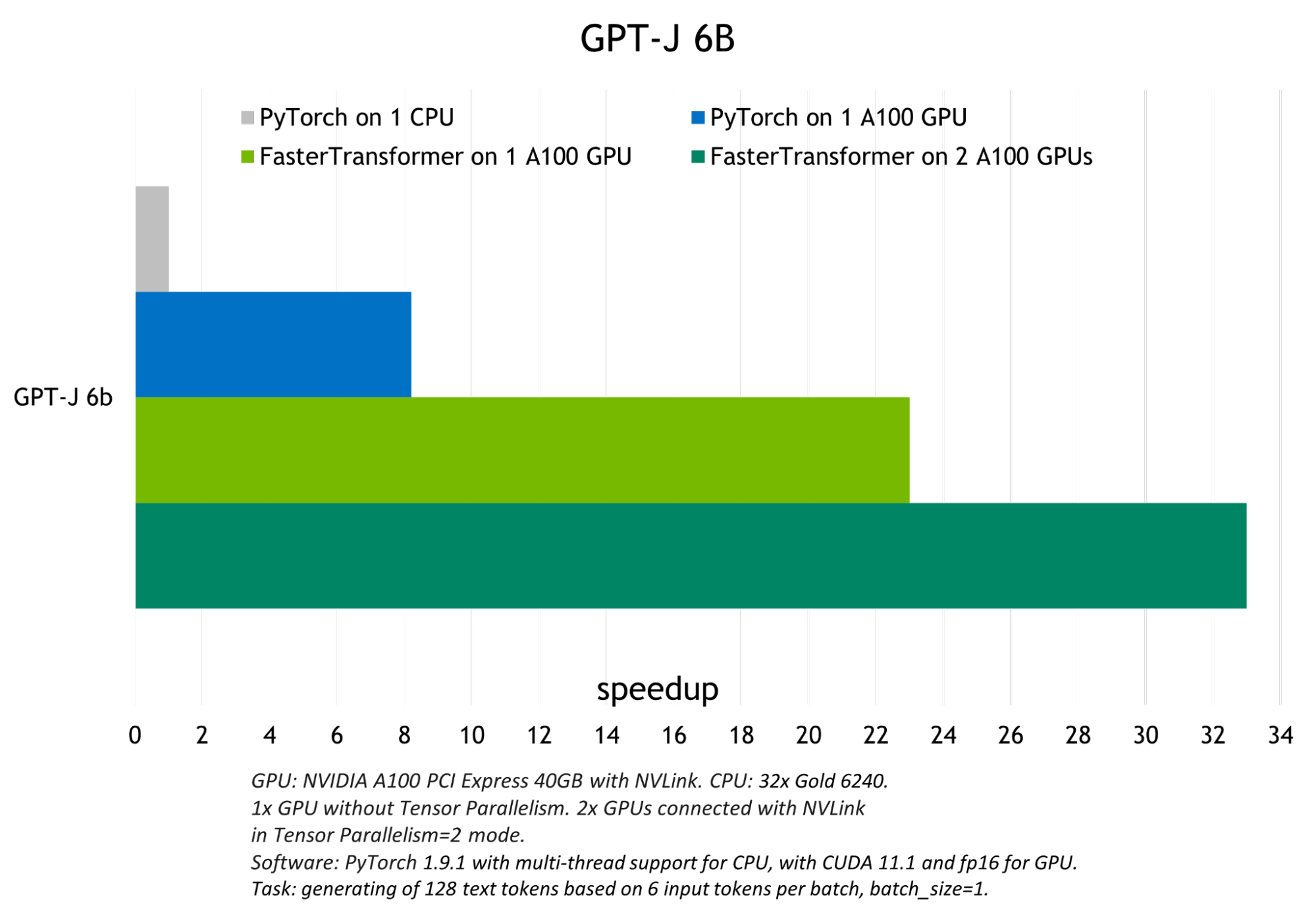

Deploy large models at high performance using FasterTransformer on Amazon SageMaker | AWS Machine Learning Blog

Surpassing NVIDIA FasterTransformer's Inference Performance by 50%, Open Source Project Powers into the Future of Large Models Industrialization | by HPC-AI Tech | Medium

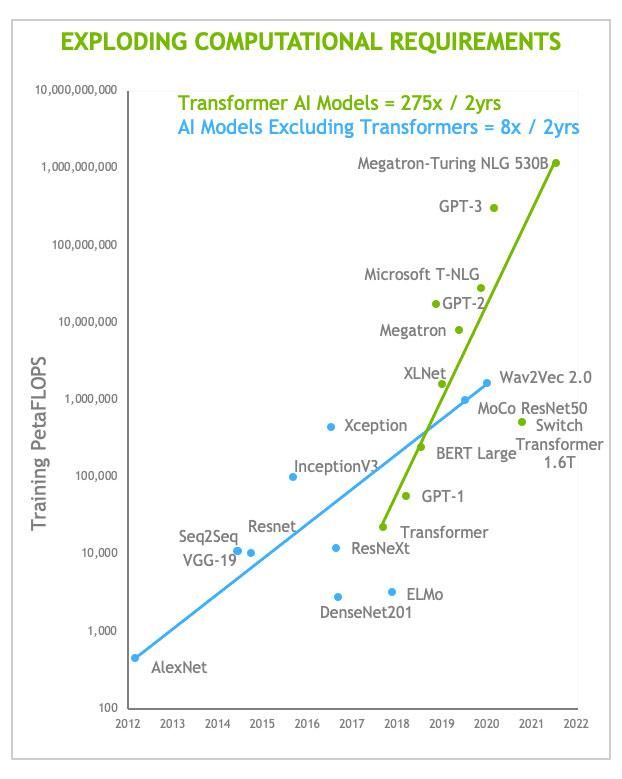

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog

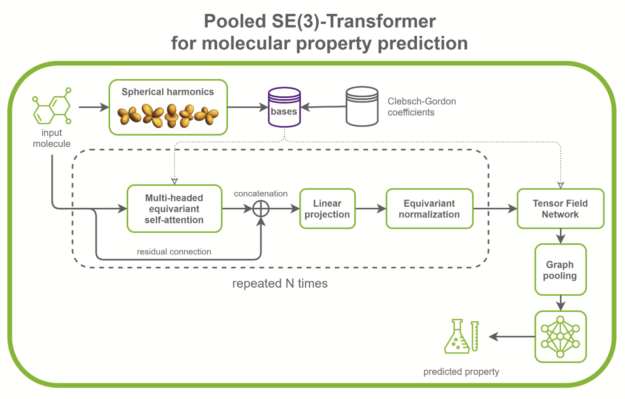

Accelerating SE(3)-Transformers Training Using an NVIDIA Open-Source Model Implementation | NVIDIA Technical Blog